Librarian: Local AI Document Assistant

Librarian is a private AI assistant that helps you search, summarize, and reason across documents and code without uploading anything to the cloud. It combines a vector search index with an agentic RAG workflow to produce answers that are grounded in your files, cover more of the relevant material, and are easier to verify.

At a glance

- Agentic RAG: iterative retrieval steps that improve coverage and completeness

- Model flexibility: use Ollama and pick the right model for the job

- LAN or Offline: share safely inside your network, or isolate completely

- Built for real libraries: works as your documents and repositories grow

Document & Code Intelligence

Two core capabilities — both grounded in retrieval so your answers stay connected to your actual files.

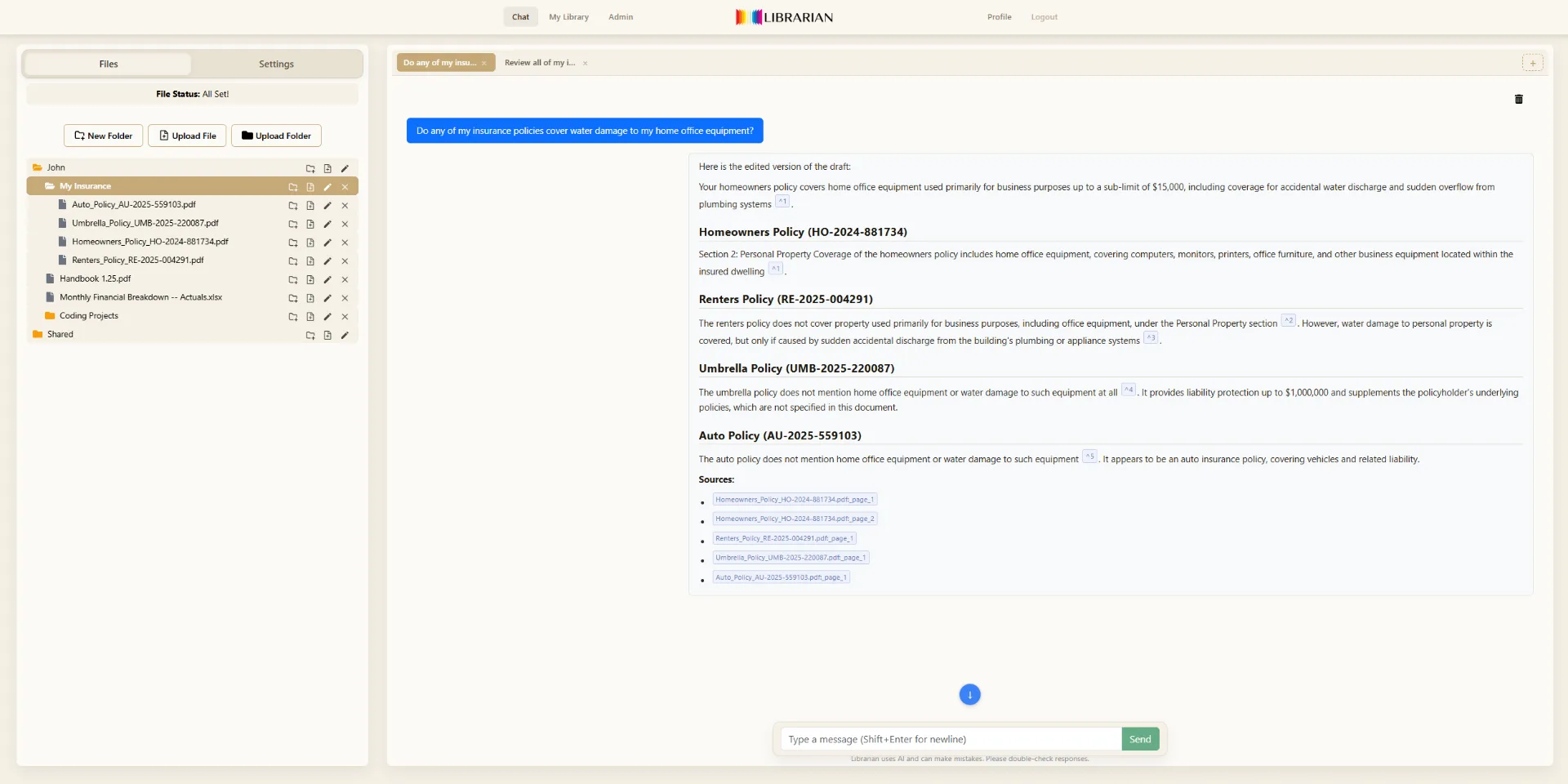

Document Intelligence

Ask questions and extract information using an AI workflow that retrieves context, checks coverage, and refines answers over multiple steps.

Supported formats

- PDF (.pdf)

- Excel (.xlsx, .xls)

- CSV (.csv)

- Plain text (.txt)

- Markdown (.md)
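As a rough illustration of how a folder could be filtered down to the formats listed above, here is a minimal Python sketch. It is not Librarian's ingestion code; the names `SUPPORTED_SUFFIXES`, `is_supported`, and `find_documents` are hypothetical, and only the file extensions come from the list above.

```python
from pathlib import Path

# Extensions taken from the supported-formats list; everything else is illustrative.
SUPPORTED_SUFFIXES = {".pdf", ".xlsx", ".xls", ".csv", ".txt", ".md"}


def is_supported(path):
    """Return True if the file extension is one of the supported formats."""
    return Path(path).suffix.lower() in SUPPORTED_SUFFIXES


def find_documents(root):
    """Recursively collect supported files under `root`, sorted by path."""
    return sorted(
        p for p in Path(root).rglob("*") if p.is_file() and is_supported(p)
    )
```

The extension check is case-insensitive, so `Report.PDF` and `report.pdf` are treated the same way.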

Code Intelligence

Index codebases and design docs. Ask questions across repositories, debug issues, and explore unfamiliar projects with context-aware retrieval.

Supported languages

- Python

- JavaScript / TypeScript

- C# / .NET

- Java

- Rust

- Go

- C / C++

- And 15+ more

Model sizing tip: 8B-class models are great for PDF reading, summarization, and Q&A. For serious coding help, such as multi-file debugging, refactors, and reliable fixes, you'll want a larger model (30B+). 70B-class models are typically stronger still on these tasks, at a much higher hardware cost. Librarian supports model switching so you can scale capability when a task demands it.

How Agentic RAG Works

Unlike single-pass RAG, which retrieves once and then responds, Agentic RAG takes multiple retrieval steps to build a more complete answer.

The agentic loop breaks complex questions into sub-queries, retrieves evidence from different parts of your documents, checks for gaps, and synthesizes a complete answer — reducing missed details and hallucinations compared to single-pass RAG.
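The loop described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not Librarian's implementation: a keyword-overlap retriever stands in for the vector index, the sub-queries are supplied by hand rather than generated by a model, and every name here (`retrieve`, `agentic_answer`, `max_rounds`, `min_overlap`) is hypothetical.

```python
STOPWORDS = {"the", "is", "a", "an", "what", "how", "who", "does", "are", "of"}


def tokenize(text):
    """Lowercase, strip basic punctuation, and drop common stopwords."""
    words = {word.strip(".,?!").lower() for word in text.split()}
    return words - STOPWORDS


def retrieve(query, passages, min_overlap):
    """Toy retriever: return the passage sharing the most words with the query.

    A real system would query a vector index here instead.
    """
    query_words = tokenize(query)
    best, best_score = None, 0
    for passage in passages:
        score = len(query_words & tokenize(passage))
        if score > best_score:
            best, best_score = passage, score
    return best if best_score >= min_overlap else None


def agentic_answer(sub_queries, passages, max_rounds=3):
    """Retrieve per sub-query, check for gaps, and retry with looser matching."""
    found = {}
    min_overlap = 2
    for _ in range(max_rounds):
        gaps = [q for q in sub_queries if q not in found]
        if not gaps:
            break  # every sub-query has supporting evidence
        for query in gaps:
            hit = retrieve(query, passages, min_overlap)
            if hit is not None:
                found[query] = hit
        min_overlap = max(1, min_overlap - 1)  # relax matching for the next round
    unanswered = [q for q in sub_queries if q not in found]
    return found, unanswered
```

Returning the unanswered sub-queries alongside the evidence lets the final synthesis step say "not found in your documents" instead of guessing, which is the behavior that distinguishes this loop from a single retrieval pass.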

Your Data Never Leaves Your Environment

Offline Mode

Localhost — single machine, zero network

Librarian is reachable only from the machine it runs on. No other devices can connect, even on the same Wi-Fi. This is the simplest isolation model — great for travel, sensitive work, or maximum separation.

LAN Mode

Private network — shared with trusted devices

Turn a trusted computer into an LLM server for your home or small office. Approved devices on your local network connect directly. Built-in device trust management lets you control who has access.

Privacy-first by design: Librarian can run without internet access. Your documents and indexes stay in your environment, and LAN access is designed to remain private — helping prevent accidental exposure and data leakage outside your network.
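The difference between the two modes can be illustrated with a small Python sketch. It assumes, purely for illustration, that Offline Mode corresponds to binding the server to the loopback address and that LAN Mode uses an allowlist of trusted device addresses. Librarian's actual device trust mechanism is not described here and may work differently; `bind_address`, `is_allowed`, and the IP-based allowlist are hypothetical.

```python
import ipaddress


def bind_address(mode):
    """Pick the listening address for a mode (assumed mapping, for illustration)."""
    if mode == "offline":
        return "127.0.0.1"  # loopback only: other devices cannot connect
    if mode == "lan":
        return "0.0.0.0"  # all interfaces: access must then be filtered
    raise ValueError(f"unknown mode: {mode!r}")


def is_allowed(client_ip, mode, trusted_ips=()):
    """Decide whether a client may connect under the given mode."""
    addr = ipaddress.ip_address(client_ip)
    if addr.is_loopback:
        return True  # the local machine is always allowed
    if mode != "lan":
        return False  # offline mode rejects every remote client
    trusted = {ipaddress.ip_address(ip) for ip in trusted_ips}
    # Require both a private-range address and an explicit trust entry.
    return addr.is_private and addr in trusted
```

For example, `is_allowed("192.168.1.20", "lan", ["192.168.1.20"])` accepts a trusted laptop, while the same address is rejected in offline mode or when it is missing from the allowlist.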

Hardware & Model Sizing

Librarian runs inference, retrieval, vectorization, and storage on your own CPU, GPU, and RAM. Stronger hardware means faster responses and room for larger models.

| Model | Parameters | VRAM (INT8) | Typical GPU | Storage | Notes |
|---|---|---|---|---|---|
| LLaMA 3.1: 8B | 8 billion | ~9 GB | 1× RTX 3090 (24 GB) | ~15–20 GB | Good starting point; quantization helps on consumer hardware. |
| LLaMA 3.1: 70B | 70 billion | ~73 GB | Multi-GPU (e.g., several 4090s) | ~140–160 GB | High capability, but heavy; best with strong GPUs. |
| LLaMA 3.1: 405B | 405 billion | Distributed | Multi-node cluster | ~780 GB | Enterprise-scale; not for home/small office use. |

Practical recommendation: Start with smaller models (8B) for document workflows, then scale up to 30B+ when you want serious coding help. Librarian lets you switch models at any time — choose the right tool for each task.
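The VRAM figures in the table above follow roughly from parameter count times bytes per weight. The Python sketch below computes that weights-only lower bound; real usage is somewhat higher because of the context cache and runtime buffers, which is why the table lists ~9 GB rather than 8 GB for the 8B model at INT8. The `overhead_gb` default is an assumed placeholder, not a measured value, and the function names are hypothetical.

```python
# Approximate bytes needed to store one weight at each precision.
BYTES_PER_WEIGHT = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions, precision="int8"):
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * BYTES_PER_WEIGHT[precision]


def fits(params_billions, vram_gb, precision="int8", overhead_gb=1.5):
    """Rough check: do weights plus an assumed overhead fit in the given VRAM?"""
    needed = weight_memory_gb(params_billions, precision) + overhead_gb
    return needed <= vram_gb
```

By this estimate an 8B model at INT8 fits comfortably on a single 24 GB card, while a 70B model does not fit on one such card even at INT4, which matches the multi-GPU guidance in the table.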

Will it run on my PC? If you can run Ollama and pull an 8B model, you can use Librarian for document Q&A. Test it yourself: install Ollama, run `ollama pull llama3.1`, then try a prompt with `ollama run llama3.1`. If that works, Librarian will too.
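The same check can be scripted. The Python sketch below talks to Ollama's local REST API, assuming the default address `http://localhost:11434` and the `/api/tags` and `/api/generate` endpoints. The helper names (`build_generate_request`, `has_model`, `check_ollama`) are hypothetical, and this is a quick readiness probe rather than anything Librarian itself runs.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # Ollama's default local address (assumed unchanged)


def build_generate_request(model, prompt, base_url=OLLAMA_URL):
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    payload = json.dumps({"model": model, "prompt": prompt, "stream": False})
    return urllib.request.Request(
        f"{base_url}/api/generate",
        data=payload.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def has_model(tags_response, wanted):
    """True if `wanted` (with or without a tag) appears in an /api/tags response."""
    names = [m.get("name", "") for m in tags_response.get("models", [])]
    return any(n == wanted or n.split(":")[0] == wanted for n in names)


def check_ollama(model="llama3.1", base_url=OLLAMA_URL, timeout=120):
    """Return the model's reply, or a short message explaining what is missing."""
    try:
        with urllib.request.urlopen(f"{base_url}/api/tags", timeout=5) as resp:
            tags = json.load(resp)
        if not has_model(tags, model):
            return f"Model '{model}' is not installed; run: ollama pull {model}"
        request = build_generate_request(model, "Say hello.", base_url)
        with urllib.request.urlopen(request, timeout=timeout) as resp:
            return json.load(resp)["response"]
    except OSError as exc:  # urllib.error.URLError is a subclass of OSError
        return f"Ollama is not reachable at {base_url}: {exc}"
```

Running `print(check_ollama())` prints either a short greeting from the model or a hint about what to fix first.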

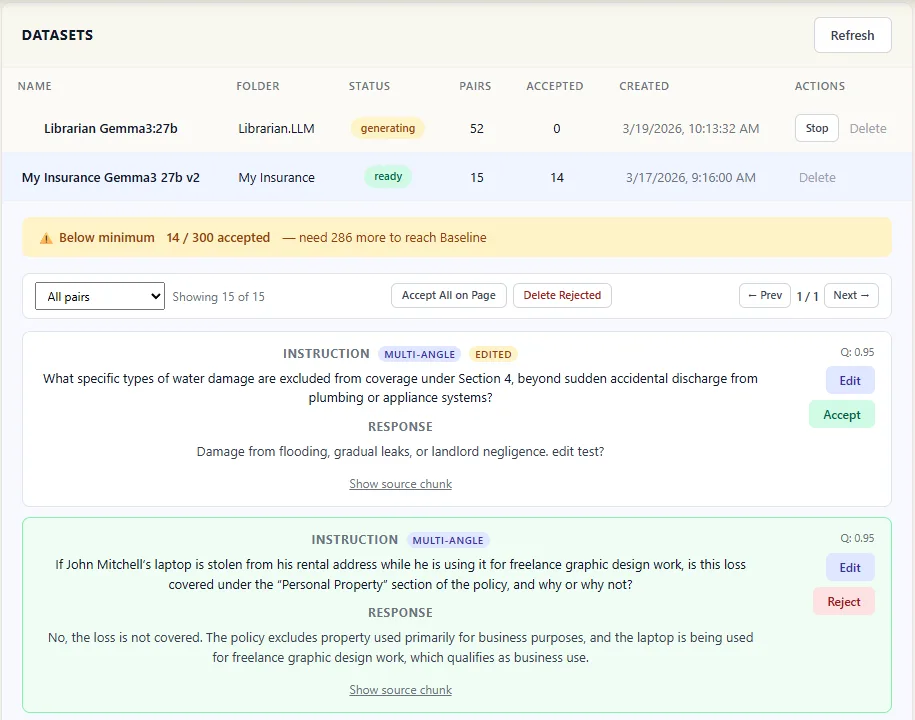

FORGE: Build an AI That Knows Your Domain

Generic AI reads your documents as plain text. FORGE creates a custom model that understands your domain — it learns your terminology, your document structure, and the relationships that matter in your field. No machine learning expertise required. No data leaves your machine.

Ready to Try Librarian?

Start with a free 30-day trial. Full Agentic RAG. No credit card. No cloud account.